I think crowning any particular product from that era the first of anything ultimately leads to a sort of epistemological dead end. What fascinates me is that this period, roughly 1974-77, was like Renaissance Florence for this stuff. Serious advances were measured in months or even weeks, and so much was simply "in the air," being hashed out at club meetings and by mail and phone among excited engineers, enthusiasts, and entrepreneurs. No one really knew what the future would look like even as they were shaping it, and so many interesting ideas popped up all over the place.

Yes. And what's really annoying about this pointless exercise is how completely some of the people who get heavily invested in a particular candidate (a really common disease among those pushing the Apple-1, sorry, gotta call it out) miss, through willful ignorance, how insulting it is to discard and denigrate the efforts of everyone else working in the field in their drive to crown a winner. There was a positively Biblical flood of technical innovation in the computer field in the early 1970s, and every drop of it raised all boats together. It is seriously not okay to hand the crown to somebody based on a completely made-up set of arbitrary criteria like "oh, that one has two circuit boards instead of one, NOT A PERSONAL COMPUTER".

And the other thing that really ticks me off about this is all the pretending that the paradigm of "a machine you can use just by sitting down at a keyboard and looking at a screen" was invented by microcomputers at all, let alone by Steve Wozniak with the Apple-1. (I've noted before that Woz's autobiography is, unfortunately, a serious offender here. If you took it as your only source, you'd not only believe that he invented the first video screen connected to a microprocessor; you could be forgiven for coming away with the impression that Woz was the one who figured out that microprocessors could power real-time interactive computers, period. I mean, seriously, parts of the book read as if he thinks the manufacturers of these chips started churning them out after finding the plans in a green meteorite that also had a suspiciously burly baby in it, with no idea what they had on their hands.) The idea of a computer with an interactive terminal, at the beck and call of a single individual, dates back in prototype form to the early 1950s. There are plenty of machines going back to at least the mid-1960s that are better "personal computers" than the Apple I by any number of measures; the only disqualifications are size and price, and of course both of those are completely subjective criteria.

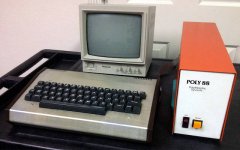

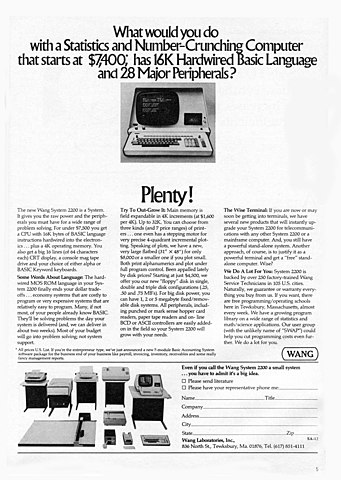

At a superficial level, the only thing distinguishing 1973's Wang 2200 from 1977's TRS-80 Model I is a roughly 10x difference in purchase price.

Obviously that's a pretty big deal (it's the difference between a nice used car and a badly used house), and, technically, one is built around a single-chip microprocessor instead of a wad of medium-integration ICs. But the point is that everybody knew where they were going with this: it was all a matter of making it smaller and cheaper. Nobody gets to wear the "inventor" crown here, sorry.

I do think it's interesting to consider, e.g., the Apple 1's weirdly succinct ~12-page manual. At a time when micro systems all came with huge xeroxed binders stuffed with data sheets and theories of operation, I wonder whether the mass-market friendliness the company eventually became known for was already peeking through.

That's an... interesting take on "friendliness", there. It's certainly thinner than the manuals that came with systems you had the option to solder up from a bare kit, but, well... it kind of goes without saying that you can skip a fair amount of the theory of operation needed to bootstrap a machine from a bare pile of circuits if you assume somebody did all that testing before it went out the door. The manual nonetheless has schematics on half its pages, and the "usage instructions", such as they are, consist of two pages of machine-language monitor syntax, two and a half pages of an assembly-language listing of the ROM, and a "HOW TO EXPAND THE SYSTEM" page that would be complete gibberish to anyone who wasn't well versed in 6502/6800 hardware theory. In short, "succinct" could very much be a synonym for "incomplete".

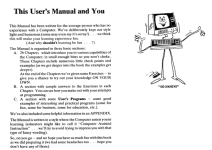

This is what a friendly user manual looks like. We might laugh at how goofy and simplistic it seems to our jaded modern eyes, but if we think the definition of "personal computer" implies a machine usable, within a reasonably short learning curve, by someone with qualifications little beyond a decent grasp of English and basic mathematics, then the humble Trash-80 has a really strong claim on the strength of its manual alone.

I mean, seriously, let's get real here: on the Apple I you not only had to load BASIC from a tape, but all you had at power-on was this minimal monitor.

Apple I BASIC doesn't even have save and load commands! There's "succinct" and then there's "unfinished"...