(Preamble: to most of you, this will seem like OCD nitpicking of the worst kind, but it ain't so. Fact is, I'm working on a graphical project which targets the IBM Color Display, and accurate colors become important for the artwork, especially when you factor in things like dithering.)

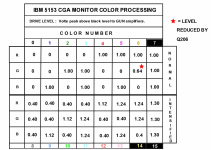

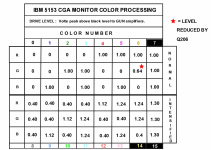

Some of you are familiar with the commonly-accepted mapping of RGBI CGA colors to 24-bit (s)RGB values. This "canonical" palette assigns a level of 2/3 to the original R,G,B components, treats I (intensity) as an additional 1/3 in all three channels, and implements the "brown fix" by halving G for color #6:

"Canonical" RGBI -> rgb24 palette (all values are in RGB hex triads)
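For reference, the rule described above is easy to reproduce. Here's a minimal sketch, assuming the usual CGA bit order (bit 3 = I, bit 2 = R, bit 1 = G, bit 0 = B); the function name is just mine for illustration:

```python
def canonical_rgbi_to_rgb24(index):
    """Map a 4-bit RGBI color index (0-15) to an (r, g, b) tuple of 8-bit values."""
    intensity = (index >> 3) & 1
    red = (index >> 2) & 1
    green = (index >> 1) & 1
    blue = index & 1
    # 2/3 of full scale (0xAA) per primary, plus 1/3 (0x55) on all channels when I is set.
    r, g, b = (0xAA * c + 0x55 * intensity for c in (red, green, blue))
    if index == 6:
        g //= 2  # "brown fix": halve green, 0xAA -> 0x55
    return r, g, b


palette = ["%02X%02X%02X" % canonical_rgbi_to_rgb24(i) for i in range(16)]
for i, triad in enumerate(palette):
    print(f"#{i:2d}  {triad}")
```

Color #6 comes out as AA5500 instead of the "dark yellow" AAAA00 it would otherwise be.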

However, this doesn't necessarily reflect the appearance of a CGA monitor like the 5153. Rather, it's derived from the way *VGA* rendered these 16 colors for backward compatibility - it's simply expanded from VGA's 6 bits per channel to 8.
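To illustrate the 6-to-8-bit expansion: as far as I know, VGA's default palette uses only four DAC levels for these 16 colors (0x00, 0x15, 0x2A, 0x3F). The sketch below assumes the expansion is done by bit replication, which is one common way to do it; for these four levels it gives the same result as scaling by 255/63.

```python
def expand_6_to_8(level):
    """Scale a 6-bit DAC level (0-63) to 8 bits (0-255) by bit replication."""
    return (level << 2) | (level >> 4)


for level in (0x00, 0x15, 0x2A, 0x3F):
    print(f"{level:#04x} -> {expand_6_to_8(level):#04x}")
```

That's where the 55/AA/FF steps in the "canonical" triads come from.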

It's canonical in the sense that it follows exactly what IBM did with VGA. But since I got my 5153, I've become convinced that this isn't a good enough model for the colors' original RGBI appearance. At least with *my* 5153, no amount of fiddling with the controls really gets the output to match that palette perceptually.

So, is it possible to get a better representation?

T-squared's recent Sanyo thread reminded me of Hugo Holden's excellent investigations of the 5153's guts. In *Using an IBM EGA card in the IBM 5155* (see https://www.worldphaco.com/), pp. 21-24 present his measurement of the voltages driving the gun amplifiers for each RGBI color.

Here's the relevant chart (I hope he doesn't mind me reproducing it here):

These measured drive levels deviate from expected values in a number of interesting ways, which the article goes into. But for me, it's even more interesting to try deriving a more accurate palette based on these results. At least, they give us a much more realistic starting point.

Normalizing the voltage range of 0-1.30 to the rgb24 range of 0-255 gets me this:

RGBI to rgb24, as derived from Hugo Holden's 5153 gun drive level measurements
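The normalization itself is just a linear rescale. A minimal sketch follows; the helper names are hypothetical, and the only figure taken from the measurements is the 1.30 top of the range. Feed it the per-gun drive levels from the chart to get a hex triad.

```python
V_MAX = 1.30  # top of the measured drive range, as stated above


def drive_to_rgb24(volts, v_max=V_MAX):
    """Linearly rescale a drive level in [0, v_max] to an integer in [0, 255]."""
    clamped = min(max(volts, 0.0), v_max)  # guard against out-of-range readings
    return int(clamped / v_max * 255 + 0.5)  # round half up


def triad(r_volts, g_volts, b_volts):
    """Format three drive levels as an RGB hex triad."""
    return "%02X%02X%02X" % tuple(
        drive_to_rgb24(v) for v in (r_volts, g_volts, b_volts)
    )
```

Note that this treats drive level as if it were already an sRGB-encoded value, which is exactly the assumption questioned in the notes below.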

Things to note:

- The levels were measured after the input processing stage, and I'm assuming that they don't require any further gamma correction.

  That can't be true unless the 5153's gamma value is equal to sRGB gamma (2.2). It very likely isn't, but I couldn't find any concrete information to tell me that, and the results are close enough to what I'm actually seeing on my monitor.

- The non-intensified colors are brighter (closer to the intensified ones) when compared to the "canonical" palette. But keep in mind that the measurement was done with the contrast knob in the 'full' position; dialing it down makes the non-intensified colors darker.

- The "brown fix" for color #6 reduces green to 64%, not 50%.

- The hi-intensity colors are also affected by the *count* of primary colors combined to create them (Hugo Holden's document explains why).
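On the gamma caveat in the first note: if the monitor's response did turn out to differ from sRGB, the correction could look something like the sketch below. The `crt_gamma` parameter is purely hypothetical (I have no measured figure for the 5153), and modelling the tube as a simple power law is itself an assumption. The sRGB encoding function is the standard piecewise one.

```python
def srgb_encode(linear):
    """Standard sRGB transfer function: linear light (0-1) to encoded value (0-1)."""
    if linear <= 0.0031308:
        return 12.92 * linear
    return 1.055 * linear ** (1 / 2.4) - 0.055


def drive_to_srgb8(level, crt_gamma):
    """Convert a normalized drive level (0-1) to an 8-bit sRGB value.

    crt_gamma is a hypothetical exponent for the monitor, not a measured 5153 figure.
    """
    linear = level ** crt_gamma  # light emitted, relative to full drive (power-law model)
    return int(srgb_encode(linear) * 255 + 0.5)


# Compare a few assumed exponents across the drive range.
for gamma in (2.0, 2.2, 2.5):
    print(gamma, [drive_to_srgb8(x / 4, gamma) for x in range(5)])
```

With an exponent near 2.2 this is close to the plain linear rescale; a higher exponent darkens the mid-range levels, a lower one brightens them.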

This mapping already looks a lot closer to reality. But does it really tell the full story? I'm not sure.

Here's a photo of the 16 colors on my 5153. I've tried to make sure that the camera settings reflect what I'm seeing as closely as possible, and to correct and white-balance the image so that gray is really gray.

Even so it's not perfect, but it gets kinda-sorta close enough:

One thing you may notice is that the contribution of each primary color to overall luminance seems to be somewhat different than expected.

For example: in my derived colormap, #2 and #3 (green and cyan) are very close in brightness. Same goes for #4 and #5 (red and magenta). But on my monitor, both of those pairs show a more noticeable difference.

It's as if blue contributes more than expected to the luminance, and/or red/green contribute less. Interestingly this doesn't have a huge effect on hue... although the magentas do appear slightly more bluish than they do in my derived palette. I think. However, anything I can come up with at this point is strictly subjective.
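To put a number on "expected": here's a rough sketch that computes relative luminance using the standard sRGB / Rec. 709 weights. Those weights assume sRGB primaries, which the 5153's phosphors may well not match, so treat this as a baseline for comparison only. The triads used here are the "canonical" ones, as stand-ins; substitute the derived values to compare those instead.

```python
def srgb_decode(encoded):
    """Standard sRGB inverse transfer function: encoded value (0-1) to linear light."""
    if encoded <= 0.04045:
        return encoded / 12.92
    return ((encoded + 0.055) / 1.055) ** 2.4


def relative_luminance(triad):
    """Relative luminance (0-1) of an RGB hex triad, using Rec. 709 weights."""
    r, g, b = (srgb_decode(int(triad[i:i + 2], 16) / 255) for i in (0, 2, 4))
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


# Stand-in values from the "canonical" palette, not the derived one.
pairs = {
    "green vs cyan": ("00AA00", "00AAAA"),
    "red vs magenta": ("AA0000", "AA00AA"),
}
for name, (first, second) in pairs.items():
    print(name, round(relative_luminance(first), 3), round(relative_luminance(second), 3))
```

Under these weights, adding blue at the same level raises green's luminance by only about 10%, and red's by about a third. A bigger visible jump on the real monitor would be consistent with blue contributing more than the sRGB model predicts.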

So if Hugo Holden is reading this, or for that matter anyone else with the right knowledge...

- Is my above mapping valid?

- Can it be fine-tuned even further, based on any published information or measurable data?

- Can I even trust what I'm seeing on my monitor, or should I just ignore all those differences and chalk them up to aging components and so on?
