OK, I got LSX running in the full 30kw of RAM... (funny detail: it does not run in SIMH - the IO page is hardcoded!)

But there is one thing I'm still thinking about. On LSX, swap space is on the user disk. You may have noticed that the /usr filesystem is somewhat smaller than / (which has 500 blocks). The swap space sits behind the blocks of /usr.

Unfortunately, the swap size is hardcoded in the kernel and depends on the size of user space. This means that a new kernel with a larger user space requires a smaller /usr filesystem. I'm not happy with this, because the current /usr filesystem with its 400 blocks is hardly enough to get the kernel compiled. So I fixed the swap space at 99 blocks, as on the vanilla LSX disks, and can now reuse the same /usr disks with every kernel.

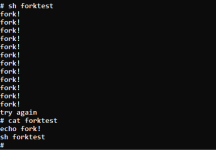
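
To make the trade-off concrete, here is a small Python sketch of the /usr disk layout. The total of 499 blocks is only an assumption (the 400 blocks of /usr plus the 99 blocks of swap), and the larger swap sizes are made-up values, not what any particular kernel would ask for.

```python
# Sketch of the /usr disk layout: filesystem first, swap area behind it.
# DISK_BLOCKS is an assumption (400 blocks of /usr + 99 blocks of swap).
DISK_BLOCKS = 499


def usr_disk_layout(swap_blocks, disk_blocks=DISK_BLOCKS):
    """Return (usr_blocks, swap_start) for a given swap size in blocks.

    Blocks are numbered from 0, so the swap area starts at the block
    number equal to the size of the /usr filesystem.
    """
    if not 0 <= swap_blocks <= disk_blocks:
        raise ValueError("swap does not fit on the disk")
    usr_blocks = disk_blocks - swap_blocks  # what is left for the filesystem
    swap_start = usr_blocks                 # swap begins right behind /usr
    return usr_blocks, swap_start


# 99 is the vanilla swap size; 150 and 200 are hypothetical larger values.
for swap in (99, 150, 200):
    usr, start = usr_disk_layout(swap)
    print(f"swap {swap:3d} blocks -> /usr {usr:3d} blocks, swap starts at block {start}")
```

Every block added to swap is a block taken away from /usr, which is why pinning swap at 99 blocks keeps the filesystem size the same for every kernel.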

Of course this comes at a price. You might run out of swap space, which results in a kernel panic. That is not a big problem: it just drops you into ODT at an address of about 000140 (a HALT instruction). From there you can re-enter LSX with a warm start by typing '0G' in ODT. And, of course, run a 'check' on the filesystems afterwards. Any file that was open for writing is probably garbage now.

Currently I wonder whether this is sufficient for hobby usage. I verified that a 30kw LSX with minimum swap is able to rebuild its own kernel, which is a good sign. On the other hand, of all system programs the c1 stage of cc is the largest: it takes 12kw, which is why this is the minimum user space for LSX.

Process scheduling is cooperative as long as I don't use the BGOPTION. This means that even if a fancy homebrewed C program allocates all the RAM it can find, it will never be swapped out. After it exits, its memory is released, so there is never a huge swapped-out process. Thus, the 99 blocks of vanilla LSX should still suffice.
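
A quick back-of-the-envelope check of that, as a Python sketch. It assumes 512-byte disk blocks, 1kw = 1024 words of 16 bits, and that a swapped-out process occupies its full image on disk. Only the 12kw of c1 comes from above; 16kw and 20kw are just example sizes.

```python
WORD_BYTES = 2     # a PDP-11 word is 16 bits
BLOCK_BYTES = 512  # assumed disk block size
SWAP_BLOCKS = 99   # swap size of the vanilla LSX disks


def image_blocks(kwords):
    """Blocks needed to hold a process image of `kwords` kilowords (rounded up)."""
    size_bytes = kwords * 1024 * WORD_BYTES
    return -(-size_bytes // BLOCK_BYTES)  # ceiling division


def images_that_fit(kwords, swap_blocks=SWAP_BLOCKS):
    """How many whole images of this size fit into the swap area."""
    return swap_blocks // image_blocks(kwords)


# 12kw is the c1 stage of cc; 16kw and 20kw are hypothetical larger programs.
for kw in (12, 16, 20):
    print(f"{kw}kw image = {image_blocks(kw)} blocks, "
          f"{images_that_fit(kw)} fit into {SWAP_BLOCKS} blocks of swap")
```

Under these assumptions two 12kw images fit into 99 blocks, but only one image of 16kw or 20kw, so the swap would get tight as soon as more than one big process had to be swapped out at the same time.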

Any comments on this? Am I missing something?