I know at least that LGR upgraded his Tandy 1000 with that Lo-Tech card.

https://youtu.be/malgCK7qHQA?t=531

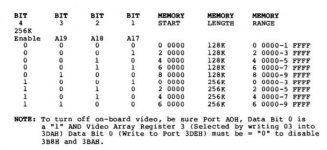

It has DIP switches for each 64K page, so you'd be flipping them in pairs.

That video may have just proven immensely useful! It's high-res enough that you can see how he set the switches, and it looks like he has all switches up on SW1 and the first two up on SW2. According to the chart on the back of the board, that means he's actually got it set to provide RAM for the entire 640k address space, and the machine apparently POSTs like that.
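The switch arithmetic can be sanity-checked with a small sketch. It assumes (not confirmed from the board's chart) that each switch enables one 64K page, in order, starting with SW1 position 1 at 00000h and continuing onto SW2. The function names and the eight-position SW2 are hypothetical.

```python
PAGE = 64 * 1024  # assumption: each DIP switch controls one 64K page

def enabled_ranges(sw1, sw2):
    """Return merged (start, end) address ranges enabled by the switches.

    sw1, sw2: sequences of booleans, True = switch up (assumed = enabled).
    Hypothetical mapping: SW1 positions 1-8, then SW2 positions 1..n,
    select consecutive 64K pages starting at 00000h.
    """
    ranges = []
    for page, on in enumerate(list(sw1) + list(sw2)):
        if not on:
            continue
        start = page * PAGE
        end = start + PAGE - 1
        if ranges and ranges[-1][1] + 1 == start:
            # contiguous with the previous page: extend that range
            ranges[-1] = (ranges[-1][0], end)
        else:
            ranges.append((start, end))
    return ranges

def total_kb(ranges):
    """Total enabled RAM in K."""
    return sum(end - start + 1 for start, end in ranges) // 1024

# The settings visible in the video: all of SW1 up, first two of SW2 up
sw1 = [True] * 8
sw2 = [True, True] + [False] * 6   # SW2 length is a guess
ranges = enabled_ranges(sw1, sw2)
for start, end in ranges:
    print(f"{start:05X}h-{end:05X}h")   # 00000h-9FFFFh
print(total_kb(ranges), "K")            # 640 K
```

Under the same assumption, flipping the SW2 pair back down drops exactly one 128K chunk and leaves 00000h-7FFFFh (512K).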

640k plus the built-in 128k is actually the memory mapping used by the Tandy 1000TX. So unless his Lo-Tech card is sitting on top of the built-in RAM at 80000h (which is possible; that kind of clash might actually be something you'd get away with), this *may* demonstrate that even the old Tandy BIOSes can grok that configuration and program Big Blue's mapping appropriately. It's frustrating, though, because as noted here:
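The "clash" case is just a question of whether two address ranges overlap. A minimal sketch, assuming the Lo-Tech card fills 00000h-9FFFFh and trying two hypothetical spots for the built-in 128K: 80000h (the clash) and A0000h (just above 640K). Neither placement is confirmed for this machine.

```python
KB = 1024

def overlaps(a_start, a_size, b_start, b_size):
    """True if the half-open ranges [start, start + size) share any address."""
    return a_start < b_start + b_size and b_start < a_start + a_size

LOTECH = (0x00000, 640 * KB)   # Lo-Tech card filling 00000h-9FFFFh

# Two hypothetical placements for the Tandy's built-in 128K
print(overlaps(*LOTECH, 0x80000, 128 * KB))  # True: clashes with the card
print(overlaps(*LOTECH, 0xA0000, 128 * KB))  # False: sits just above 640K
```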

Knowing that the T1k searches for 128K pages helps, and it kinda sucks that you gotta flip through each manual in the series in order to figure out the commonalities.

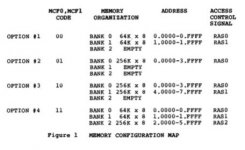

It really is a pain in the rear, because a different version of the same chart explaining the Big Blue memory mapping register appears in every manual, but none of them explains it completely. They seem to have decided that only the settings that applied to each model *when it was introduced* are relevant, so no single chart covers every possibility the chip can handle.

(This is why I'd love to see the Check-IT output from an EX or HX equipped with the 512k Plus board: it's still unresolved what happens if you have expansion RAM up to 7FFFF in a system with a 256k Big Blue.)

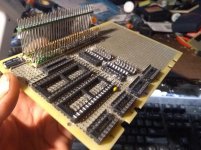

How hard would it be to dumb down your test board so you just have a single 512k RAM chip mapped from 0h to 7FFFF? The decoding for that should be really trivial. This is a terrible idea, but in principle couldn't you just connect A0-A18 straight across, use A19 as your chip enable, and essentially dispense with decoding entirely? (A19 will be low whenever the machine is addressing the bottom 512k, and of course MEMR and MEMW will need to be active to make the chip read or write, so... bang, no decoders needed? I feel like this is wrong, but I can't quite pin down why.) I have three theories about what you'll get out of this:
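Here's a rough model of that no-decoder hookup, just to make the signal logic explicit. It assumes a 512K×8 SRAM with active-low /CE, /OE and /WE pins, with A19 tied to /CE, /MEMR to /OE and /MEMW to /WE; the function names are made up for illustration. It only models which cycles the chip answers, not whether anything else in the machine (the built-in RAM, for instance) answers the same addresses.

```python
def ram_signals(addr, memr_n, memw_n):
    """Model the hypothetical no-decoder wiring for a 512K SRAM.

    addr:   20-bit bus address (A0-A19)
    memr_n: /MEMR level (0 = active)
    memw_n: /MEMW level (0 = active)
    Returns (ce_n, oe_n, we_n, chip_addr).
    """
    ce_n = (addr >> 19) & 1        # A19 wired straight to the active-low /CE
    oe_n = memr_n                  # /MEMR -> /OE
    we_n = memw_n                  # /MEMW -> /WE
    chip_addr = addr & 0x7FFFF     # A0-A18 connected straight across
    return ce_n, oe_n, we_n, chip_addr

def chip_drives_bus(addr, memr_n, memw_n):
    """True when the SRAM would put data on the bus (selected and read)."""
    ce_n, oe_n, _, _ = ram_signals(addr, memr_n, memw_n)
    return ce_n == 0 and oe_n == 0

print(chip_drives_bus(0x7FFFF, 0, 1))  # True: last byte of the bottom 512k
print(chip_drives_bus(0x80000, 0, 1))  # False: A19 high deselects the chip
```

Note that A0-A18 alone would alias every 512k block onto the chip; it's only A19 on /CE that keeps it out of 80000h and up.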

A: 640k! (Plus possibly some RAM sitting in the A0000 page.) This would come from Big Blue mapping its 256k from 80000-BFFFF, and would be optimal.

B: 512k, because the Tandy won't put a 256K Big Blue at 80000h and instead exiles Big Blue to B0000 like in a fully expanded TX. (That means you'd need to either add another 128k to get the backfill, or pull back to 384k so it maps Big Blue to its documented 60000h position and keeps double-mapping the last 32k.)
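Theories A and B mostly come down to how much RAM is contiguous from address 0. A quick sketch, assuming the memory count stops at the first gap and never goes past the 640K (A0000h) conventional limit. The 64K size used for the B0000 cases is a placeholder, since only the start address matters there.

```python
KB = 1024
CONVENTIONAL_TOP = 0xA0000  # 640K; the A0000h page and above are not conventional RAM

def contiguous_kb(blocks):
    """Conventional RAM (in K) contiguous from address 0.

    blocks: list of (start_address, size_in_bytes) tuples.
    Assumes the count stops at the first gap and is capped at 640K.
    """
    top = 0
    for start, size in sorted(blocks):
        if start > top:
            break                      # hole: nothing above here gets counted
        top = max(top, start + size)
    return min(top, CONVENTIONAL_TOP) // KB

EXPANSION = (0x00000, 512 * KB)        # the test board, 00000h-7FFFFh

theory_a = [EXPANSION, (0x80000, 256 * KB)]              # Big Blue at 80000h-BFFFFh
theory_b = [EXPANSION, (0xB0000, 64 * KB)]               # Big Blue exiled to B0000h (placeholder size)
backfill = [(0x00000, 640 * KB), (0xB0000, 64 * KB)]     # expansion plus another 128k
pulled_back = [(0x00000, 384 * KB), (0x60000, 256 * KB)] # Big Blue at 60000h

for name, blocks in [("A", theory_a), ("B", theory_b),
                     ("B + 128k", backfill), ("B, 384k", pulled_back)]:
    print(name, contiguous_kb(blocks), "K")
```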

C: Something less useful, but probably not something that will smoke the computer.