First, they substituted a custom-made controller named V112 for the Motorola 6845 (the same chip used on the CGA). Another thing to take into account is that the Hercules card already had 64 KB of RAM, while a standard CGA had only 16 KB. So while 16 KB was available as a text buffer, the rest of the graphics memory, which is not in use in text mode, could be used to load custom character sets.

Actually the HGC+ still uses a standard 6845 or compatible.

See Here. The V112 is an ASIC that consolidates much of the multiplexers and latches and other gross stuff necessary to change the memory architecture as needed for the different modes. The CRTC doesn't actually "know" anything about character generation beyond supplying a line-number output in addition to a memory address; everything else is left up to external logic.

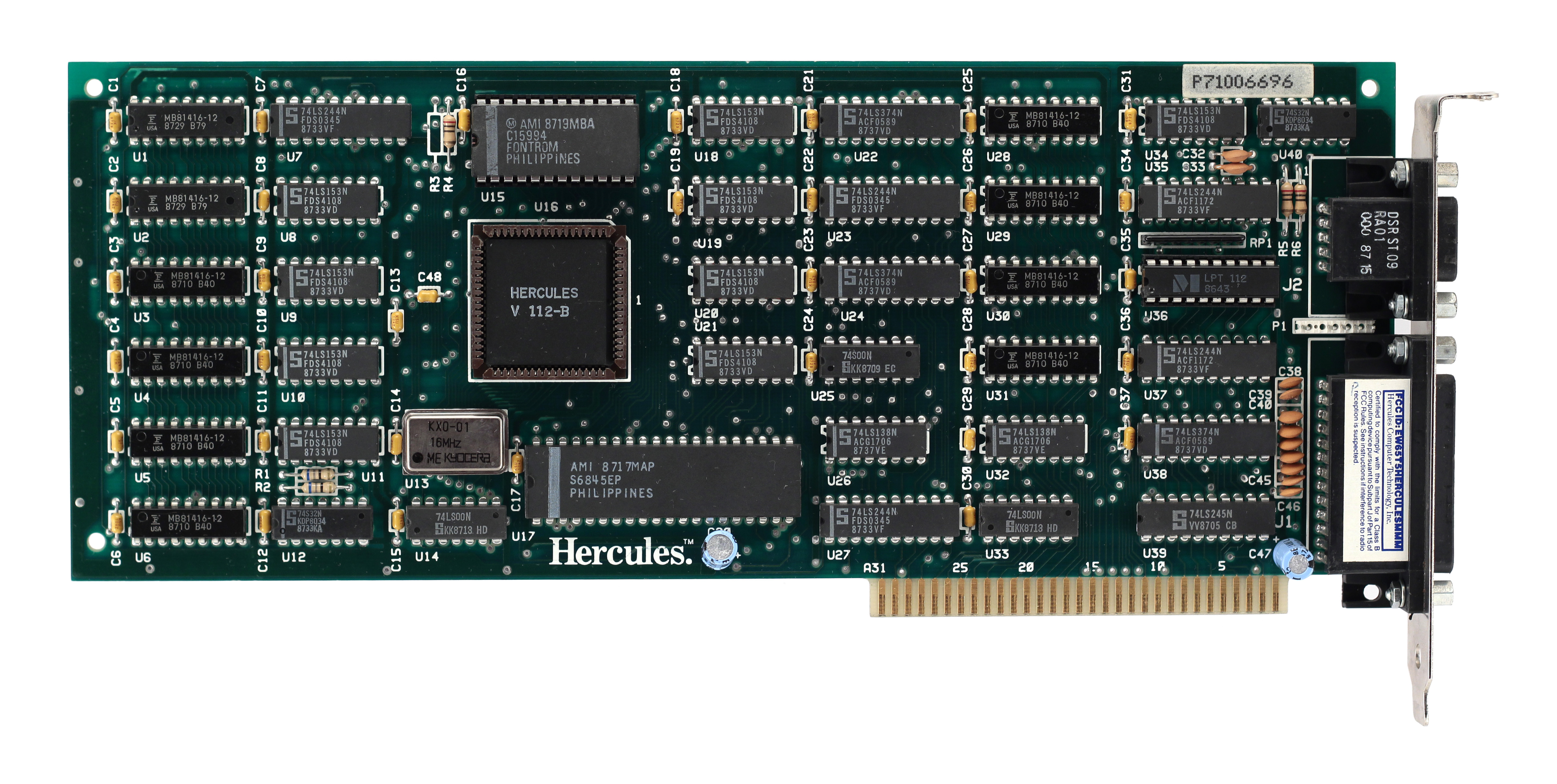

What's really interesting is that if you look at a tight closeup of the HGC+ you'll see something that I'm surprised wasn't mentioned on Scott Elliot's page:

See the chips labeled "MB81416-12"? Those are 16Kx4 DRAMs. That means two of them make a 16Kbyte memory, so for 64K you need eight of them. There aren't eight of them; there are ten of them. If I had to hazard a guess, I think what's going on when this card is running in RAMFONT mode is that it uses the four on the right half to create a 16-bit-wide character/attribute buffer (effectively 16Kx16 to the video hardware but 32Kx8 to the computer, although only 4K of it would normally be used), and the six on the left side are the 48K of RAM that acts as the character generator memory (48Kx8).

Chopping it up like this would actually simplify things a *lot*. In text mode a normal MDA or CGA card has to make two memory fetches for each character: one for the glyph, which is latched and presented to the character generator, and one for the color/blink/whatever attributes, which are applied to the character generator output. The HGC+ lets you use 4 bits of the attribute byte to select which page of the soft-loaded character sets to use (effectively giving you 12-bit-wide characters). This would make for a timing nightmare if it took two fetches to pull the effective address you need to apply to the address bus for the character memory. By divvying it up like this they made life way easier on themselves, at the cost of wasting some memory.
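To make the arithmetic behind that guess concrete, here's a rough Python sketch. The chip counts and the 4-bit page select come from the description above; everything else is an assumption for illustration, not the card's documented behavior: the `glyph_address` helper is hypothetical, I've assumed the low four attribute bits are the page select, and I've assumed 16 bytes of font RAM per glyph.

```python
# Capacity arithmetic for banks built from 16K x 4 DRAMs (MB81416-style).
CHIP_WORDS = 16 * 1024   # addressable locations per chip
CHIP_BITS = 4            # bits stored at each location


def bank_bytes(chips):
    """Total capacity in bytes of a bank made of `chips` 16Kx4 DRAMs."""
    return chips * CHIP_WORDS * CHIP_BITS // 8


def glyph_address(char_code, attribute, scan_line, rows_per_glyph=16):
    """Hypothetical font-RAM address for one scan line of one glyph.

    ASSUMPTIONS (not confirmed by the post): the low 4 attribute bits
    select the font page, and each glyph occupies `rows_per_glyph` bytes.
    """
    font_page = attribute & 0x0F                    # assumed page-select bits
    code12 = (font_page << 8) | (char_code & 0xFF)  # 12-bit character code
    return code12 * rows_per_glyph + scan_line


if __name__ == "__main__":
    print("all ten chips :", bank_bytes(10), "bytes")
    print("text buffer   :", bank_bytes(4), "bytes (4 chips, 16K x 16)")
    print("font memory   :", bank_bytes(6), "bytes (6 chips, 48K x 8)")
    # Under the assumed 16 bytes per glyph, this many glyphs fit in font RAM:
    print("glyphs that fit:", bank_bytes(6) // 16)
    print("address of 'A', page 3, line 5:", glyph_address(0x41, 0x03, 5))
```

The point of the sketch is that both the character byte and the attribute byte feed the font address, so getting them in a single 16-bit fetch means the address is ready in one step.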

Here's a craptastic video I made about some toy video hardware I built on a breadboard that can use a single bank of memory for both the character glyphs and software-defined characters. (It also does linear framebuffer bitmapped graphics, but *technically* it implements them by mapping a byte of framebuffer memory onto a 1-line-tall character set, because that eliminates the need to have different output hardware for the "text" and "graphics" modes.) The performance of this thing is roughly analogous to CGA, and it gets away with doing the "two fetches for every character" thing with a single 8-bit memory, but if I had to add attributes to it things would start getting a little dicey, and that's with SRAM that supports 55ns access times.
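For anyone wondering how "bitmap graphics as a 1-line-tall character set" can work, here's a toy Python model of one way to read it. This is only a sketch of the idea, not the actual breadboard circuit: the names `char_generator`, `IDENTITY_FONT`, and `render_row` are made up for illustration, and the "identity font" (glyph n is simply the bit pattern n) is my assumption about how the mapping could be done.

```python
def char_generator(font, code, scan_line):
    """Look up the 8 pixel bits for one scan line of one character."""
    return font[code][scan_line]


# Assumed "identity" font: 256 glyphs, each one line tall,
# where glyph n is just the bit pattern of n.
IDENTITY_FONT = [[n] for n in range(256)]


def render_row(memory_row, font, glyph_height):
    """Render one character row as a list of scan lines ('#' = lit pixel)."""
    lines = []
    for scan_line in range(glyph_height):
        bits = "".join(
            format(char_generator(font, code, scan_line), "08b")
            for code in memory_row
        )
        lines.append(bits.replace("1", "#").replace("0", "."))
    return lines


if __name__ == "__main__":
    # Two framebuffer bytes pass through the "text" path unchanged.
    for line in render_row([0xF0, 0x0F], IDENTITY_FONT, glyph_height=1):
        print(line)
```

With a one-line-tall identity font, the same lookup-and-shift path that draws text ends up emitting the framebuffer bytes as-is, which is why no separate graphics output stage is needed in this model.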